By Reiko Take and Lavinia Tyrrel

We spent last Friday in the kick-off workshop looking at how to get aid organisations to make better use of evidence and research in development policy and programming.

This is all part of an action research project funded by the Research for Development Impact (RDI) Network and implemented by La Trobe University (LTU) and Praxis Consulting. In a nutshell, the project brings together 13 aid organisations and universities (think DFAT through to Oxfam) to unpack what the barriers and opportunities are to using evidence and research in aid and development.

The “action” bit means that we (the 13 organisations) are both the ‘subjects’ of this research and the ‘do-ers’.

La Trobe, RDI and Praxis give us support to go off and try to change how our organisations use research, and in return they get to ‘study’ us and our organisations (willing lab-rat does spring to mind).

RDI and LTU want to know what works, what doesn’t and why when trying to raise the profile and use of evidence and research in designing, implementing and reviewing aid programs and policy.

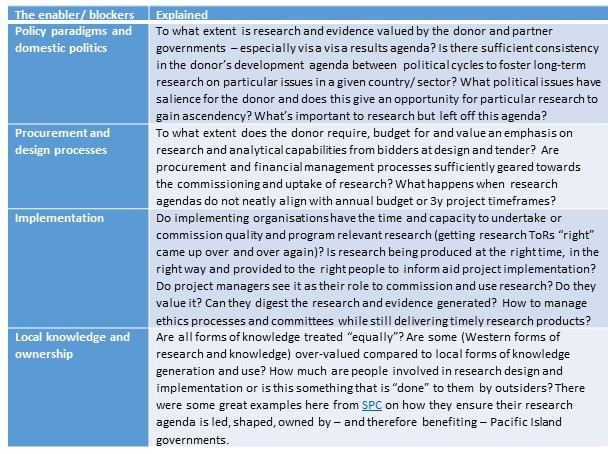

What struck us most was that we all – NGO, government, private sector, university – identified a broadly similar set of factors across the aid industry which could promote or inhibit the use of research and analysis in our own organisations. Including:

Of course, in addition to this, each organisation spent time navel-gazing to understand its own unique political economy: the factors (structural, institutional, individual or collective) that make it harder or easier for us to use research.

Unsurprisingly, leadership and collective action were consistent themes here, either as a force for good or as a blocker. So were incentives. On the latter, there was good discussion amongst the consultancy group and some NGOs on the tension between cost recovery and the intrinsic value of research for more effective aid (the social good side of consultancies). The universities, for their part, discussed the push for academics to publish on topics they or the university might be interested in, versus researching issues that matter to people in developing countries or to the policy makers who fund things.

Finally, what are the prospects for change to the status quo?

Hard to know. We certainly ended the day on a more optimistic note than we began. At the start of the day each group mapped their research barriers on Lisa’s institutional iceberg, which draws on Matt Andrews and others. Below the waterline sit the informal institutions and norms that are hard to shift; above it sit the formal institutions, policies and laws that are also hard to shift but at least easier to see. Everyone put almost everything below the line. Rather sobering. See below.

However, and rather surprisingly, by the end of the day each organisation had come up with an ambitious list of actions they wanted to take forward in their own organisation, from lobbying Vice Chancellors through to writing research into bids and establishing research libraries and communities of practice. Or as one revolutionary put it, they plan to “hijack new policy opportunities for change”.

So will we all see through our commitments?

Who knows. Jury is still out. But we will get back to you in 6-8 months’ time…

Thanks for the article Reiko Take and Lavinia Tyrrel. Looking forward to how the action research dialogue integrates capturing the cross cutting governance and humanitarian issue of mitigation of modern slavery; in particular including capture of aid sector impacts and the socially responsible business knowledge, actions and impacts.

